AI/ML Foundations

PyTorch from the Ground Up

Tensors, autograd, training loops, and model saving. The complete workflow from first tensor to deployed model.

You've built linear regression from scratch with NumPy: manual gradients, manual weight updates, manual everything. That understanding matters. But implementing everything by hand for complex models means spending more time debugging array shapes than solving problems.

PyTorch [1] bridges the gap between "understand the math" and "build real systems." It feels like NumPy with GPU acceleration and automatic differentiation built in. You experiment and debug like regular Python (no static graph compilation), and the code you write for experiments can largely carry over to deployment.
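To make the "NumPy plus automatic differentiation" idea concrete, here is a minimal sketch that computes the gradients of a mean-squared-error loss for a one-variable linear model two ways: once with autograd, and once with the hand-derived formulas you would write in NumPy. The toy data (`y = 2x + 1`), the variable names, and the zero starting values for `w` and `b` are illustrative assumptions, not part of the tutorial's later code.

```python
import numpy as np
import torch

# Toy data for y = 2x + 1 (values chosen purely for illustration)
x_np = np.array([0.0, 1.0, 2.0, 3.0], dtype=np.float32)
y_np = 2.0 * x_np + 1.0

# NumPy arrays convert directly to tensors
x = torch.from_numpy(x_np)
y = torch.from_numpy(y_np)

# Parameters that autograd should track, both starting at zero
w = torch.tensor(0.0, requires_grad=True)
b = torch.tensor(0.0, requires_grad=True)

# Forward pass: prediction and mean squared error
loss = ((w * x + b - y) ** 2).mean()

# Backward pass: autograd fills in w.grad and b.grad
loss.backward()

# The same gradients derived by hand, as in a NumPy-only implementation
residual_np = (0.0 * x_np + 0.0) - y_np             # prediction - target at w = 0, b = 0
grad_w_manual = 2.0 * np.mean(residual_np * x_np)   # dL/dw
grad_b_manual = 2.0 * np.mean(residual_np)          # dL/db

print(w.grad.item(), grad_w_manual)
print(b.grad.item(), grad_b_manual)
```

Both approaches should agree. The difference is that the autograd version does not change when the model does, while the hand-derived formulas have to be re-derived for every new architecture.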

Tutorial Goals

- PyTorch Tensors — GPU-accelerated arrays for all AI computation

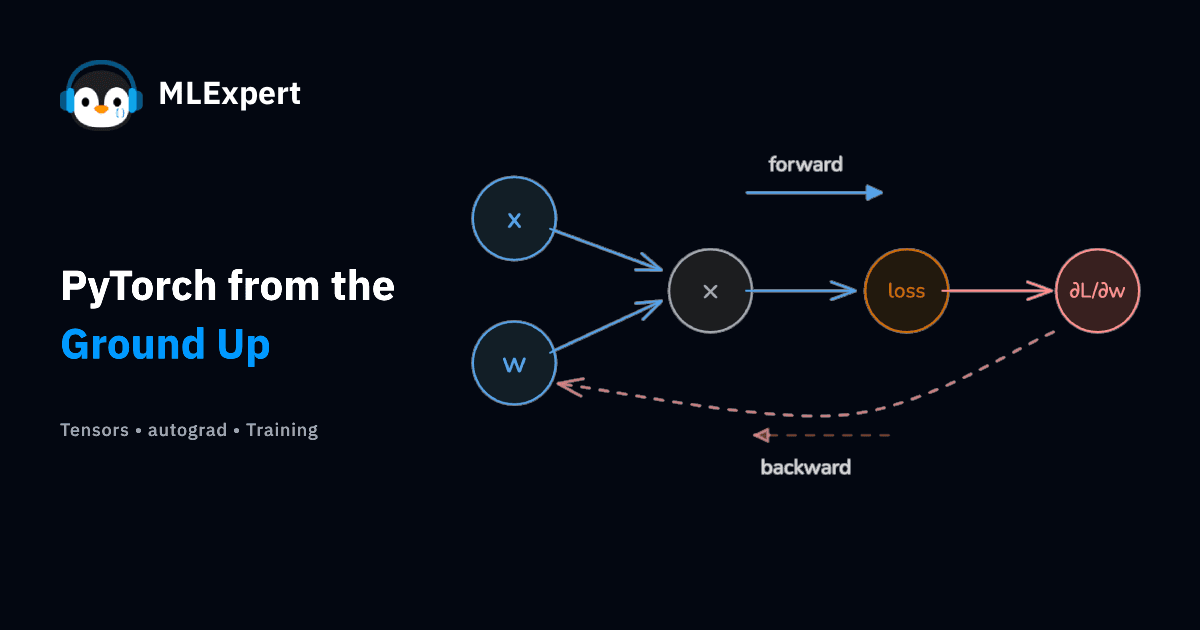

- Autograd — automatic gradient calculation via the chain rule

- nn.Module — the standard way to define and organize models

- Complete training workflow — data loading, optimization, evaluation

- Model saving and loading with safetensors